With artificial intelligence (AI) on the rise, failed AI projects are becoming increasingly common. About 90% of companies that have invested in AI have not seen significant financial benefits from their investments[1]. IBM has deprioritized its Watson technology due to inaccurate recommendations on cancer treatments. Amazon scrapped an AI recruitment tool after it showed bias against women[2]. These are just two examples of large companies running into challenges. Smaller businesses also find it difficult to get value from AI investments.

Yet companies continue to invest in AI projects. According to IDC, corporate spending on AI systems was over $50 billion in 2020, up from $37.5 billion in 2019. By 2024, investment is expected to reach $110 billion, based on IDC forecasts.

Companies risk seeing their AI projects fail unless they approach those projects differently.

What follows are several key reasons why companies do not achieve the expected return on their investments:

- Approach: Lack of clear strategy and understanding of what is achievable

- Design: Poor model design, data and governance issues and algorithm bias

- Implementation: Limited internal support for full implementation

- Trust: Lack of trust in the insights

- Action: Inability to translate insights to action

When designed well, AI systems can deliver valuable insights into your business operations and revolutionize your approach to driving value for your customers and shareholders.

However, if you don’t plan your AI projects holistically and take systemic action based on the insights, you can waste resources.

Consider this framework to secure ROI from your AI projects:

- Align with Strategic Objectives – Before you invest in any AI projects, start with the business objectives. Then work your way to the AI projects or use cases whose insights will accelerate progress toward the company’s strategic objectives. Ensure from the start that you are investing in the right AI projects for the company.

- Design to Predict – Before you build out the analytics model, validate the approach(es) thoroughly so you don’t have to make costly course corrections down the road. Build the models to predict the outcomes you’re looking for. Plan early to build the visualization of the model insights for the audiences that need to take an action.

- Drive Systemic Action – Insight without any action is a wasted effort. Plan on change management to secure buy-in from all levels within the organization. Refine business processes and align training, incentives, and performance management so all relevant operations in the business are aligned to act on the insights.

Now, let’s review each step of this framework.

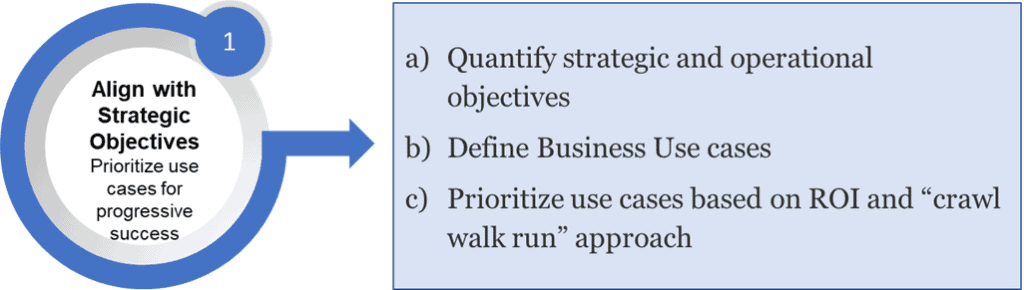

1. Align with Strategic Objectives – prioritize use cases for progressive success

a) Quantify strategic and operational objectives: First define and “quantify” the strategic objectives, then break them down into specific operational objectives. “Improve client retention” is a good strategic objective but not specific enough to operationalize, whereas “Improve client retention by 3 points to 78% by end of the year” is quantifiable.

Now, identify the operational objectives that enable you to achieve the strategic objective. “Improve customer satisfaction” could be one such operational objective. As worded, though, it gives you no way to hold someone accountable or measure progress. “Improve NPS [Net Promoter Score] by 5 points by end of the year” is a measurable goal with a clear deadline.

Apply the SMART approach (SMART goals are Specific, Measurable, Achievable, Realistic and anchored within a Time frame) or a similar approach to quantify the objective, identify owners, determine the baseline and set up specific targets over time.

b) Define Business Use Cases: Break down the operational objectives into potential projects or use cases. An example use case for “Improve NPS (Net Promoter Score) by 5 points by end of the year” could be an AI-based project to identify dissatisfied clients who are likely to be a retention risk. Improving NPS is, in turn, one of the ways to improve retention. An AI model that identifies clients with a high probability of canceling would provide valuable insights. The customer success team can then use these insights to pay particular attention to the higher-risk clients and take proactive steps to avoid losing them.

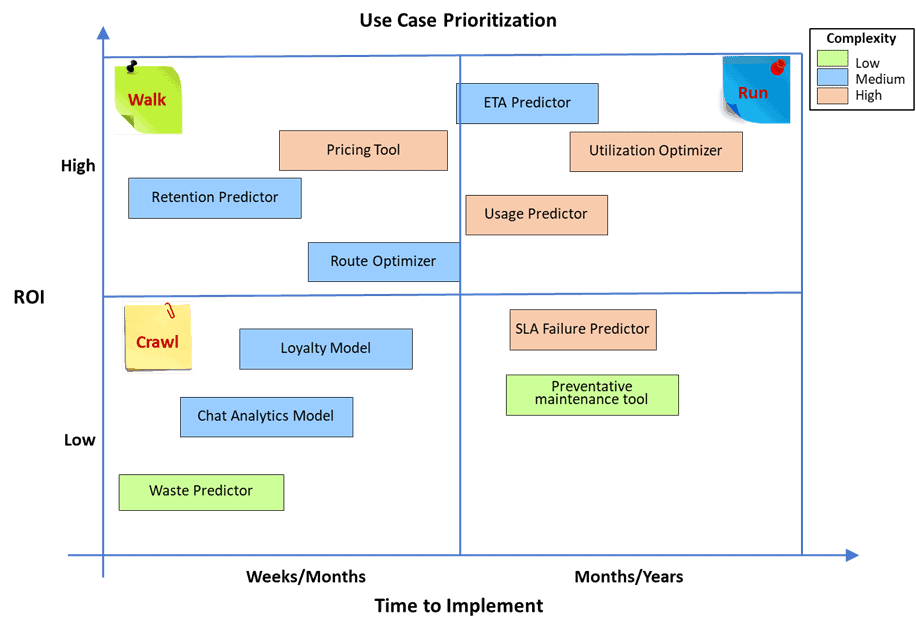

c) Prioritize use cases based on ROI and a “crawl-walk-run” approach: Develop an estimate of the potential ROI, the time to implement and the complexity of each use case. Plot the use cases on a 2×2 matrix with value or ROI on one axis and time to implement on the other axis, highlighting the complexity of each use case as indicated below.

A low-risk crawl-walk-run approach would mean starting with low or medium complexity use cases in the bottom left quadrant and moving toward the top right quadrant as you begin securing the ROI that could fund subsequent projects.
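The prioritization described above can be sketched in a few lines of Python. This is only an illustration: the `UseCase` fields, the example use cases, their ROI and time estimates, and the cut-off values that split the matrix are all assumptions, not figures from this article.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    roi: float        # estimated return (unit is an assumption, e.g. $ thousands)
    months: float     # estimated time to implement
    complexity: str   # "low", "medium", or "high"


def quadrant(uc, roi_cut, months_cut):
    """Place a use case in one cell of the 2x2 value/time matrix."""
    value = "high value" if uc.roi >= roi_cut else "low value"
    speed = "slow" if uc.months > months_cut else "fast"
    return f"{value}, {speed}"


def crawl_walk_run(use_cases):
    """Order use cases from least to most complex, quickest first within a tier."""
    rank = {"low": 0, "medium": 1, "high": 2}
    return sorted(use_cases, key=lambda uc: (rank[uc.complexity], uc.months))


# Hypothetical candidates with made-up estimates, for illustration only.
candidates = [
    UseCase("Churn-risk scoring", roi=400, months=4, complexity="medium"),
    UseCase("Support ticket triage", roi=150, months=2, complexity="low"),
    UseCase("Dynamic pricing", roi=900, months=12, complexity="high"),
]

for uc in crawl_walk_run(candidates):
    print(uc.name, "->", quadrant(uc, roi_cut=300, months_cut=6))
```

Sorting by complexity first keeps the early projects low-risk. The quadrant label then shows which of those early projects also pay back quickly enough to fund later ones.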

Ensure you have representatives from all relevant departments and functional areas involved in the development, planning, prioritization, and execution of the use cases. Involving the right representatives every step of the process not only strengthens the alignment with the strategic objectives, but also begins the process of securing buy-in across the organization.

2. Design to Predict – build models to accurately predict desired outcomes

a) Develop a Proof of Concept (POC) to confirm approach: A common mistake is to embark on a large project to build an analytics model before determining if you have enough of the right data or predictors and the right analytical model to predict the desired outcomes.

Start by defining the current process. Conduct interviews with the departments and individuals that impact the outcomes and are impacted by the activities. Determine the types of analyses that could be applied to address this specific use case. Develop a POC (or multiple) to select the best analytical model(s) to predict outcomes.

Use all available data to develop and test the preliminary models and to determine the best predictors and the best model. In determining the data requirements, consider all of the following:

- Data within organizational systems that are readily accessible (e.g., CRM, databases)

- Data within the organization that is not readily accessible (e.g., PDFs, pictures, surveys)

- Data outside the organization, or publicly available data (e.g., average income levels by zip code available from data published by the IRS)

Data quality is always a concern given the adage “garbage in, garbage out.” However, poor data quality is not always a deal breaker. For the POC, manual data cleansing and transformations can yield the results and validation necessary before moving on to the next stage.

Utilize the POC to determine the systemic changes that will be required to improve data quality on an ongoing basis. Often, minor changes to existing processes can result in new information that wasn’t originally available.
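As a rough sketch of what a POC comparison might look like, the snippet below scores two candidate predictors against a naive baseline on a small holdout set. Everything in it is assumed for illustration: the feature names (`logins_per_month`, `nps`), the cut-offs, and the toy records are invented, and a real POC would use your own data and more capable models.

```python
def accuracy(predictions, labels):
    """Share of predictions that match the observed outcome."""
    hits = sum(p == y for p, y in zip(predictions, labels))
    return hits / len(labels)


def majority_baseline(train_labels):
    """Naive model: always predict the most common training outcome."""
    majority = max(set(train_labels), key=train_labels.count)
    return lambda row: majority


def threshold_rule(feature, cutoff):
    """Candidate model: predict cancellation when a feature is below a cutoff."""
    return lambda row: 1 if row[feature] < cutoff else 0


# Toy data, invented for illustration: 1 = client cancelled, 0 = client stayed.
train_labels = [0, 0, 1, 0, 1, 0]
holdout = [
    ({"logins_per_month": 2, "nps": 3}, 1),
    ({"logins_per_month": 15, "nps": 9}, 0),
    ({"logins_per_month": 4, "nps": 8}, 1),
    ({"logins_per_month": 20, "nps": 6}, 0),
    ({"logins_per_month": 3, "nps": 5}, 0),
]

models = {
    "baseline": majority_baseline(train_labels),
    "low logins": threshold_rule("logins_per_month", 5),
    "low nps": threshold_rule("nps", 7),
}

labels = [y for _, y in holdout]
scores = {
    name: accuracy([model(row) for row, _ in holdout], labels)
    for name, model in models.items()
}
best = max(scores, key=scores.get)
print(scores, "best:", best)
```

The baseline matters: a candidate that cannot beat "always predict the most common outcome" is not yet worth building out.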

b) Build and train the analytics model: At the completion of the POC you should have a good idea of the best analytical model for your particular use case and the likely predictors. Now, build the model and apply the required data extraction, cleansing, and cataloguing approaches to prepare the data for the model.

Ensure the models are designed with attention to data and governance requirements while incorporating safeguards to avoid algorithm or design bias. Build and integrate the model into the production systems as appropriate.

c) Develop dashboards for visibility and actions: If you want to take an action based on insights from the analytical models, provide visibility of relevant data and insights to the specific audiences that need to take an action and/or be aware of the changes.

Define KPIs (Key Performance Indicators) that need to be monitored to verify that the actions have been executed based on the insights. Define target metrics for each KPI. Determine the KPI owners across the organization while ensuring the KPIs are disseminated to the right departments and individuals who can impact them.

For example, in order to improve NPS by 5 points by end of the year, the sales, customer success and technical support departments will need to mobilize. Potentially, they will have NPS and other operational targets to meet. Make sure they have visibility into the metrics and are held accountable to the targets so you can achieve the operational goals.
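One way to picture the KPI definitions behind such a dashboard is a small record per KPI with an owner, a baseline and a target. The sketch below is an assumption-laden illustration: the KPI names, owners and numbers are invented, and it assumes that a higher value is better for every KPI.

```python
from dataclasses import dataclass


@dataclass
class KPI:
    name: str
    owner: str       # department accountable for the metric
    baseline: float  # value when tracking started
    target: float    # value to reach
    current: float   # latest measured value

    def status(self) -> str:
        """Report whether the KPI has met target, is improving, or is at risk."""
        if self.current >= self.target:
            return "met"
        if self.current > self.baseline:
            return "improving"
        return "at risk"


# Hypothetical KPIs and values, for illustration only.
kpis = [
    KPI("NPS", "Customer Success", baseline=40, target=45, current=42),
    KPI("At-risk clients contacted (%)", "Customer Success", 50, 90, 95),
    KPI("Tickets resolved on first contact (%)", "Technical Support", 60, 75, 58),
]

for kpi in kpis:
    print(f"{kpi.owner:18} {kpi.name:40} {kpi.status()}")
```

Keeping the owner on the record makes it straightforward to filter the same list into a view for each department.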

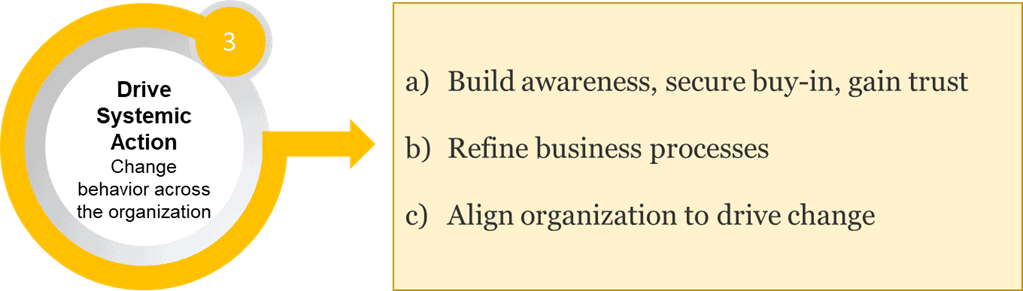

3. Drive Systemic Action – change behavior across the organization

a) Build awareness, secure buy-in and gain trust: While AI systems can generate valuable insights, these insights alone cannot drive value. For that, you need to ensure the right individuals take the right actions. If a customer success agent does not take steps to mitigate the retention risk of a client that the model flags as “high probability to cancel,” the outcome will not change.

Before launching the analytics model to the organization, you need to gain the trust of the individuals. To gain trust:

- Build awareness of the issue being addressed and why it is critical, both for the individuals and for the organization

- Outline the actions being taken to address the issue

- Describe how AI fits into the solution and why it is the best approach, while clarifying any misconceptions

b) Refine business processes: Once you’ve done that, define what needs to change in order to achieve the objectives. Knowing which clients are likely to cancel is valuable. Now make sure the relevant people are reducing that risk.

To that end, define the required changes to the existing processes and communicate them to all relevant departments and individuals. When a client who is at risk of canceling calls technical support, the team needs to prioritize differently and make the specific efforts and investments needed to earn or retain the business. The customer success department may also need to proactively understand the client’s concerns and respond accordingly.
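A process change like this can be as simple as reordering the support queue using the model’s output. The sketch below assumes a hypothetical `churn_risk` lookup of model scores per client and an arbitrary 0.7 cut-off for “high risk”. Neither comes from this article.

```python
def prioritize(tickets, churn_risk, high_risk=0.7):
    """Move tickets from high-risk clients to the front of the queue.

    tickets:    list of (ticket_id, client) in arrival order
    churn_risk: mapping of client -> predicted probability of cancelling
    high_risk:  assumed cut-off above which a client is treated as high risk
    """
    def tier(ticket):
        _, client = ticket
        return 0 if churn_risk.get(client, 0.0) >= high_risk else 1

    # sorted() is stable, so arrival order is preserved within each tier.
    return sorted(tickets, key=tier)


# Hypothetical tickets and model scores, for illustration only.
tickets = [("T1", "acme"), ("T2", "globex"), ("T3", "initech")]
churn_risk = {"acme": 0.20, "globex": 0.85, "initech": 0.75}

print([ticket_id for ticket_id, _ in prioritize(tickets, churn_risk)])
```

Clients with no score fall back to 0.0 and keep their normal place in line, so missing model output never blocks a ticket.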

c) Align organization to drive change: To drive action from a systemic perspective, you may need to update existing training or provide new training to the relevant employees. Align incentives and performance management systems to focus on high-risk clients.

Customer success managers and their teams must focus attention on high-risk clients to affect the outcome. To effect change, you may need to train the customer success agents to deal with high-risk clients and potentially carve out specific financial incentives for retaining them.

The framework will help improve your odds of success by translating insights into sustained, relevant actions. Refine organizational processes and behavior with a robust management system in place to ensure the organization is aligned to achieve its goals. Only then can companies expect to secure the ROI from their AI investments.

[1] Based on a survey of more than 3,000 company managers about their AI spend conducted by MIT Sloan Management Review and Boston Consulting Group.

[2] Forbes article “Companies Will Spend $50 Billion On Artificial Intelligence This Year With Little To Show For It”, Oct 20, 2020

* * *

This is the first installment in Pragmatic Institute’s series of white papers by Sciata President Harish Krishnamurthy on ensuring ROI from artificial intelligence, transforming insights into action, and driving a cultural change in how your organization leverages data. Read the second piece, “Aligning IT and Business Strategy for Project Success” and the third, “Using AI to Maximize Customer Lifetime Value.”

Author

Harish Krishnamurthy, with 36 years of expertise in data, has held pivotal roles at Honeywell, McKinsey & Company, A.T. Kearney, IBM, Insight, Spear Education, Sciata, and Pragmatic Institute. With leadership spanning P&L, sales, marketing, and strategy, he has made significant contributions to the field. For questions or inquiries, please contact [email protected].