In the HBO series Silicon Valley, one of the characters wants to develop an artificially intelligent application to identify food photos taken with your smartphone. To get started, the developer created a more modest application called Not Hotdog. His AI product could only tell if a food was not a hot dog.

He created the application the way that most AI products are developed. With access to huge numbers of pictures of hot dogs on the internet, he used machine learning: feeding data into a machine and letting it look for matching patterns.

In this case, the developer fed hundreds of thousands of pictures of hot dogs into the machine. The machine would turn the images into binary patterns and “learn” what it means to be a hot dog.

The more pictures of hot dogs it sees, the smarter it gets at identifying something new. That means it will still be able to make the identification even if it sees something it hasn’t seen before—like a Chicago-style hot dog or the vile Cincinnati hot dog. In this sense, it’s learning.

In machine learning (ML) language, this is a standard supervised ML data-classification problem. It’s supervised because the developer started with a “training set” of thousands of images that he had “labeled” as containing hot dogs. It’s a classification problem because the developer was trying to sort images into two groups: one that contained hot dogs and one that did not.
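To make the idea concrete, here is a minimal sketch of supervised classification in Python. It is not how the show's app (or any real image classifier) works: the two numeric features per image, the tiny training set, and the nearest-centroid technique are all assumptions chosen to keep the example short. The point is only the shape of the problem: labeled examples go in, and a rule for labeling new examples comes out.

```python
from statistics import mean

# Hypothetical training set: each "image" is reduced to two made-up
# features (say, redness and elongation), paired with a human-supplied label.
TRAINING_SET = [
    ((0.9, 0.8), "hot dog"),
    ((0.8, 0.9), "hot dog"),
    ((0.7, 0.7), "hot dog"),
    ((0.2, 0.1), "not hot dog"),
    ((0.1, 0.3), "not hot dog"),
    ((0.3, 0.2), "not hot dog"),
]


def train(examples):
    """Compute the average feature vector (centroid) for each label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(dim) for dim in zip(*vectors))
        for label, vectors in by_label.items()
    }


def classify(centroids, features):
    """Return the label whose centroid is closest to the new example."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(
        centroids,
        key=lambda label: squared_distance(centroids[label], features),
    )


centroids = train(TRAINING_SET)

# Neither of these feature vectors appears in the training set.
print(classify(centroids, (0.85, 0.75)))
print(classify(centroids, (0.15, 0.25)))
```

The "learning" here is nothing more than averaging the labeled examples, which is why the quality and quantity of the training set matter so much. A real image classifier would learn its features from raw pixels rather than rely on two hand-picked numbers.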

In the end, the investors in the application weren’t impressed. They wanted a product that could identify all foods. All they got was something that could show you when something was not a hot dog.

These investors didn’t recognize that creating an application that could identify all foods would have taken years of effort with huge amounts of data. The developer had to begin somewhere, so Not Hotdog was a good place to start. But it seemed silly and useless to the investors, so they passed.

The Not Hotdog Rule

The developer was still able to turn around and sell it to Instagram for several million dollars. It turned out to be a more accurate way to detect if men were posting the wrong kind of selfies.

There’s a lesson here for AI product managers and marketing specialists. It’s not just about learning AI technology and the terms. Instead, you have to change the way you think about delivering products. Think of it as the Not Hotdog Rule.

The Not Hotdog Rule is that the road to interesting AI products is often paved with the stepping stones of silly or useless products.

There was no way the Not Hotdog developer knew that this would be the fate of his ML product. What he created was silly and useless, but in the end, it evolved into something extremely valuable. There was no way he could see this as a stepping stone to Instagram. It was only visible once he got further down the path. The Not Hotdog Rule isn’t just limited to fictional examples.

Consider:

- The Google Brain project was focused on identifying cats from millions of photos on the internet. In a sense, it was an AI “not cats” product.

- The DeepMind project started by having a deep neural network teach itself how to play decades-old Atari 2600 games.

- Researchers at Microsoft taught an ML neural network how to identify humor in New Yorker cartoons.

Each of these AI “products” would send shivers down the spine of even the most open-minded product manager. Yet top AI companies like Microsoft and Google invest millions into their development.

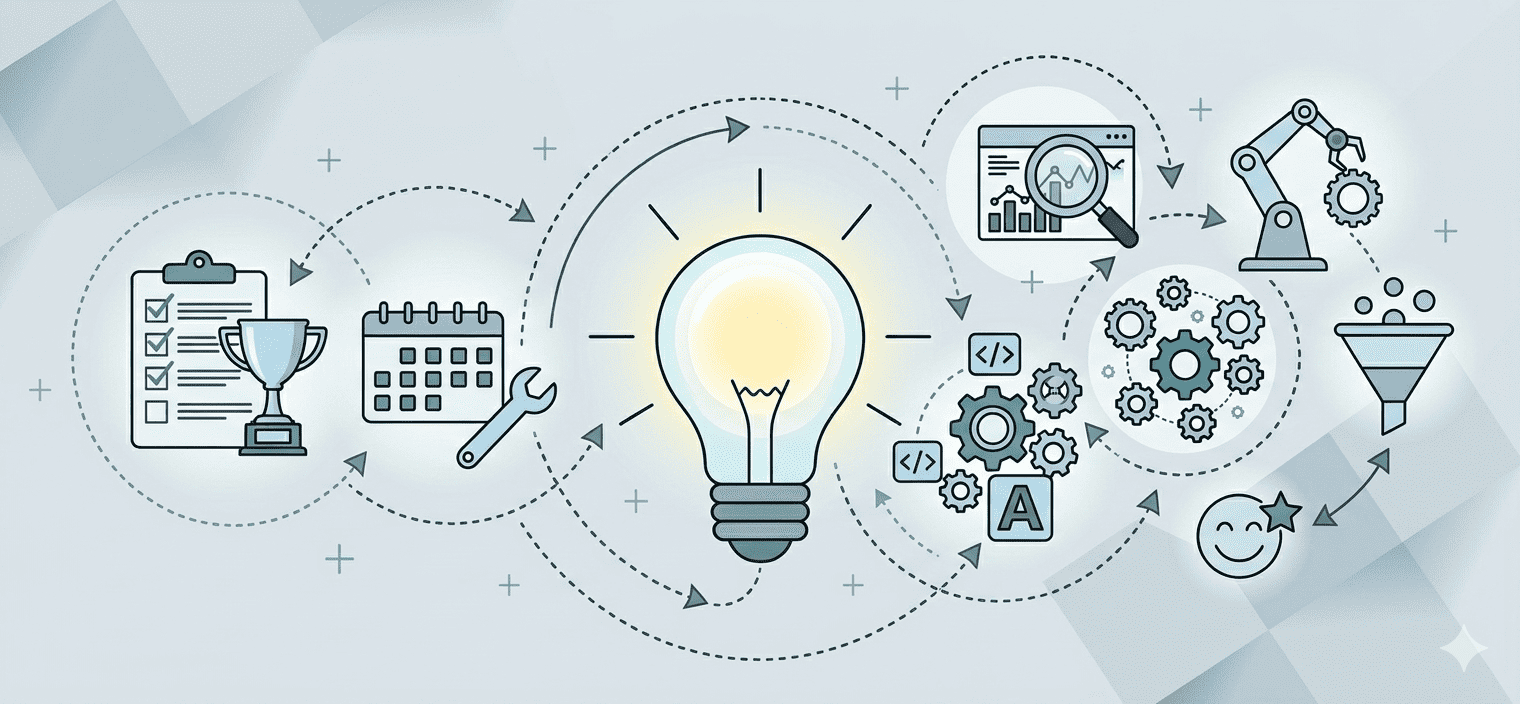

They do this because they recognize that innovation isn’t a straight line. That developing products with ML is more like science than manufacturing. That sometimes the most brilliant ideas are built on a mountain of silly or useless stepping stones. You need a lot of “not products” on the way to discovery.

These ideas have the potential to become a step to something more valuable. The challenge with this type of discovery is that you don’t see all the stepping stones until you’re further down the path. If you look at every step as a “product,” you’re going to miss out on the discoveries you need to develop something really valuable.

Manufacturing still heavily influences most product management. There’s a lot of emphasis on planning, requirements, forecasting and lifecycle management. This type of mindset makes it really difficult to develop these AI products. It assumes that each block stacks on top of the other in a linear and predictable way. Machine learning products don’t usually follow this straight line, though.

I once worked for a credit card–processing organization that was using ML to come up with targeted promotions. The model was not much of an improvement at delivering promotions. But the team discovered that it was excellent at predicting when a customer might have trouble paying their bill. They used this model to develop a completely new product that helped credit card companies take proactive steps to make sure customers were spending wisely.

If the company had focused solely on its objective of delivering promotions, it would have missed the opportunity to develop a new product. In a sense, the promotions were a stepping stone to this new product. But the path to this new product only became visible when they looked backward at the previous steps. Their “not” product ended up being much more valuable as a way to identify distressed customers.

A Scientific-Method Mindset

While this mindset is uncommon in most organizations, it’s not that unusual in scientific fields. In many scientific fields, discoveries with no apparent value later become part of a larger breakthrough. This is all part of the scientific method.

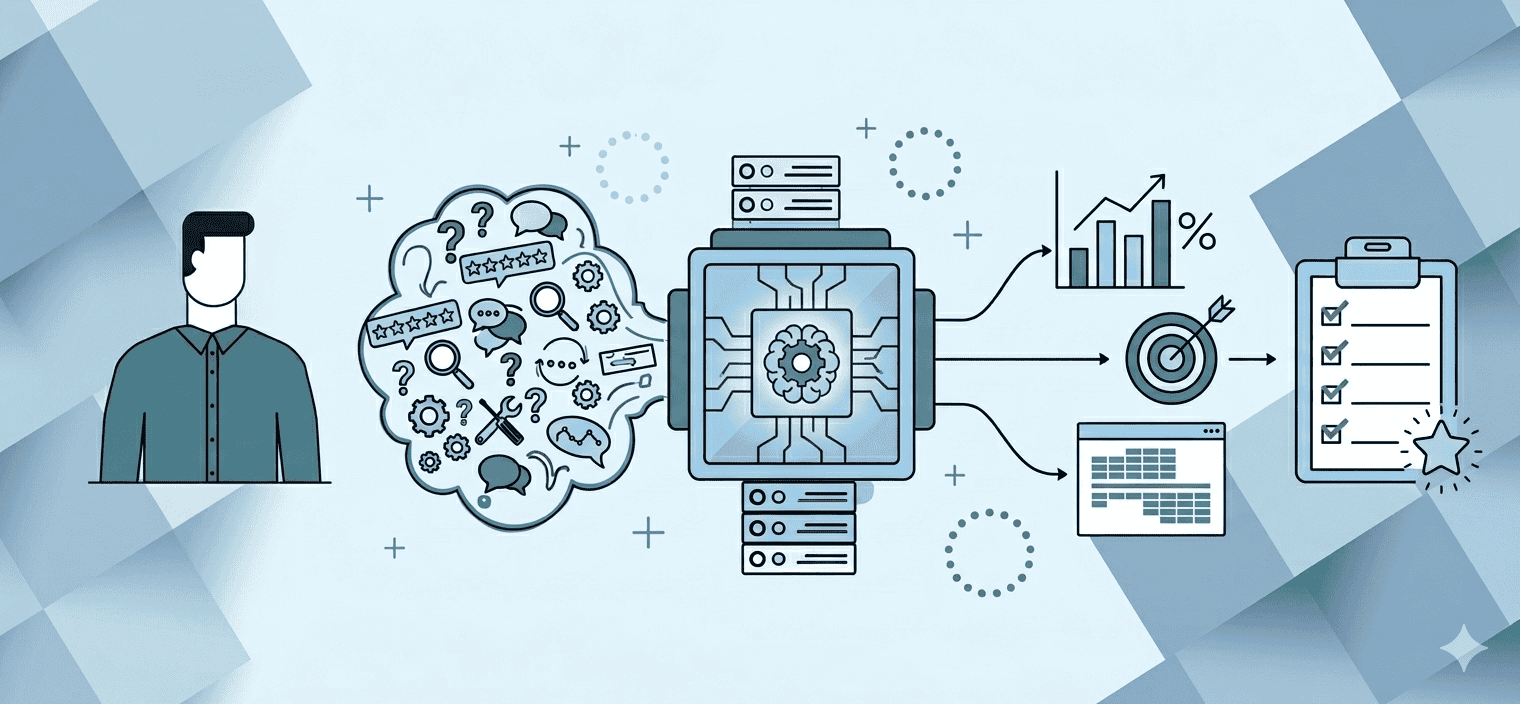

The scientific method starts with a question, which leads to a testable proposed answer called a hypothesis. Then you have to use empirical evidence to try to support (or disprove) the hypothesis. That’s why you see scientists running several small experiments. They might use the results from these experiments to create evidence for or against their hypothesis. Or they might use the results to ask an entirely different question.

In the past two decades, software developers have embraced this mindset when delivering products. One of the most popular approaches is the scrum framework. It encourages your team to inspect and adapt, and to work on short-term goals instead of long-term objectives. You don’t want your team focused on long-term objectives at the expense of short-term discoveries.

Machine learning products push this challenge even further. That’s why, as scrum did for software, it makes sense to rethink some of the key roles and processes that you use to deliver products.

Organizations can get a lot of value from working in smaller teams that embrace a more scientific mindset. I’ve tried a few different approaches, but what I found is that having small teams with well-defined roles leads to the best discoveries.

The ideal team has three people:

- A data analyst to crunch the data and use machine learning tools to turn out reports and compelling visualizations

- A knowledge explorer to come up with interesting questions and help the team come up with small products that might not have immediate value but could lead to further discovery

- A product manager who communicates these discoveries to the rest of the organization and solicits feedback

This team could work like an engine of discovery, churning out smaller “not” products so that your organization has enough stepping stones to create paths to something more valuable.

Stumbling Upon Ideas

Establishing the team is only the first step. The real challenge is allowing them to explore your data in a way that might deliver small, useless products. You have to let them make their own Not Hotdogs.

That might seem counterintuitive. Most organizations strive to exceed their customers’ expectations. They come up with products that have clear objectives and then work to deliver those objectives. That approach works well if you are delivering running shoes or home appliances. But when you start working with AI products, this mindset starts to get in the way.

The reality is that your small teams will learn a lot more about your data and your customers if you remove these objectives and expectations. They will get a sense of what AI tools work the best with your data. They will use machine learning to stumble upon insights and meander into valuable products.

You won’t be able to plan out your prototypes because, in a sense, you need to build prototypes to figure out what you need in order to build a valuable product. With enough Not Hotdogs, you’ll increase the chances that you can build on them or combine them in new and unpredictable ways.

To get real value from your AI products, you have to let go of the idea that everything your team produces should be valuable. To paraphrase Jedi Master Yoda, you have to “unlearn what you have learned” about product management. If you focus on your objectives, you might miss out on your best discoveries.

So don’t think of artificial intelligence and machine learning as new types of technology to use in your products. Instead, rethink your mindset around what it means to deliver products. Let the teams find the Not Hotdogs in your organization. In the end, it might be what you’re not looking for that ends up being the next big thing.

Author


Doug Rose, a professional with 27 years of expertise, specializes in organizational coaching, training, and change management. Having contributed to organizations like Sprint Nextel and LinkedIn, Doug is a transformative force. For questions or inquiries, please contact [email protected].