
AI agents help product managers move from one-off outputs to structured, goal-driven systems that execute repeatable work, turning raw inputs into usable insights and deliverables. When applied with clear goals, defined processes, and human oversight, they reduce manual effort and enable product managers to focus on higher-value decision-making.

Key Takeaways

- AI agents are most valuable for structured, repeatable product work. They excel at tasks like synthesis, analysis, and first-pass outputs where consistency matters more than creativity.

- Clear goals, defined steps, and constraints determine output quality. Without structure, agents produce inconsistent results; with it, they become reliable and scalable.

- AI agents increase efficiency, but product managers still own decisions. They reduce manual effort and speed up workflows, but judgment, prioritization, and strategy remain human responsibilities.

AI agents are quickly becoming one of the most talked-about applications of artificial intelligence in product management. But for many product managers, the conversation is still unclear. Definitions vary, capabilities are evolving, and most examples feel disconnected from the actual work of building and managing products.

What’s missing isn’t more detailed explanations. It’s clarity on how these systems apply to real product work and how to start using them in a way that improves outcomes, not just efficiency.

That’s where AI agents are starting to stand apart. They don’t just generate responses. They can take a goal, work through a series of steps, and produce outputs that product managers can actually use. When applied well, they reduce the time spent assembling and organizing information, allowing product managers to focus more on evaluation, prioritization, and decision-making.

As Dan Corbin, a Pragmatic Institute instructor with extensive experience in product management and professional coaching, emphasizes, the difference isn’t the technology; it’s how intentionally it’s applied. Without structure, agents produce output. With structure, they produce something useful.

Before getting into how product managers are using them, it’s important to define what AI agents actually are and where some of the confusion comes from.

What Is an AI Agent?

At a practical level, an AI agent is a system that can take a goal and work toward it with some degree of independence. Unlike a simple prompt that generates a response, an agent is designed to move through a process. AI agents break work into steps, use data or tools, and produce an outcome.

Across major definitions from companies like IBM, Google, AWS and organizations like McKinsey and MIT, there is broad agreement on a few core characteristics. AI agents operate toward a defined goal, work through multiple steps, use tools or external data, take actions, and exhibit some level of autonomy in execution.

Definitions begin to differ around how much autonomy and complexity an AI agent can exercise. Some describe agents as structured systems that follow defined workflows, while others extend the concept to systems that can plan, adapt, and coordinate more dynamically.

For product managers, the takeaway is straightforward: AI agents represent a shift from one-off outputs to systems that can execute work in a more continuous and structured way.

How Do AI Agents Work?

There are generally three types of AI agents: retrieval, task, and autonomous. Each is designed for a different kind of work and has a different level of capability. While the underlying technology can be complex, the way product managers interact with agents is not. You can read more about the way AI agents work, but here we are going to focus on how product managers use them.

At the core, every AI agent starts with a clearly defined goal. This is where many product teams go wrong. They focus on the task – summarize this, analyze that – but they do not define what the output should actually achieve. As Dan explains, “you have to clearly define the goal, not just the task,” because the outcome determines whether the output is useful.

From there, the work needs to be broken into steps. Agents are only as effective as the structure behind them, which means documenting how a task should be completed, not just what needs to be done. Dan emphasizes that you should “define each step of the task along the way,” so the agent can follow a consistent and repeatable process.

Clarity also matters when it comes to constraints. Without it, outputs quickly become inconsistent or unreliable. As Dan puts it, you need to “be explicit about what data can be used, what actions are allowed, and how you want the output to look.” That level of specificity is what turns an agent from a one-off experiment into something dependable.

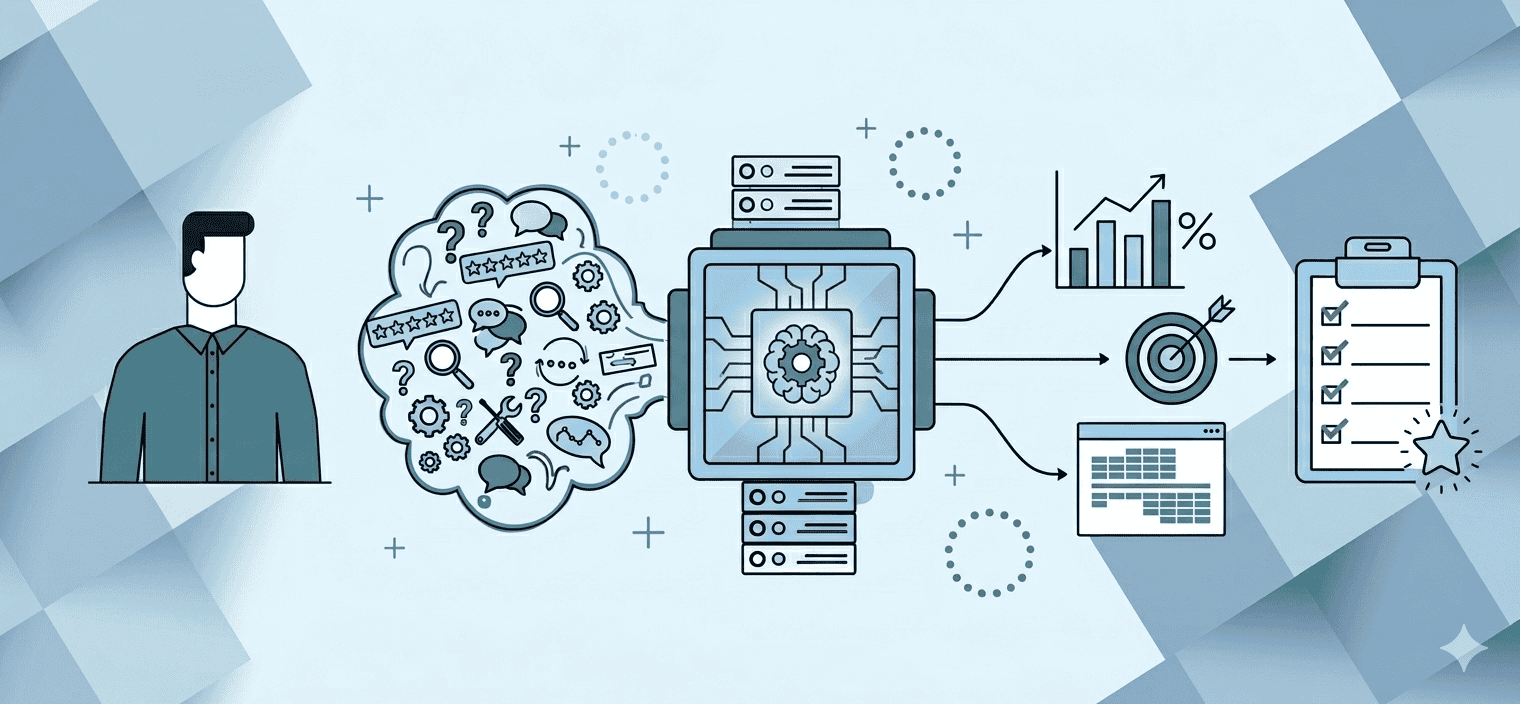

In practice, most effective AI agents follow a simple structure:

- A clearly defined goal: What outcome should this produce?

- A set of inputs and constraints: What data can it use? What is off-limits?

- A documented process: What steps should it follow to get there?

- A structured output: What should the result look like so it can be used?

When these elements are in place, AI agents move from experimentation to something much more repeatable and scalable. Without them, results may look impressive, but they’re difficult to trust or reuse.
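The four elements above can be captured as a small checklist in code. The following is a minimal sketch in Python, not a reference to any particular agent platform; the `AgentSpec` class and its field names are hypothetical and exist only to show how a draft can be checked for gaps before it is handed to an agent.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """Hypothetical container for the four elements listed above."""
    goal: str = ""
    inputs_and_constraints: list[str] = field(default_factory=list)
    process_steps: list[str] = field(default_factory=list)
    output_format: str = ""

    def missing_elements(self) -> list[str]:
        """Return the names of the elements that are still empty."""
        checks = {
            "goal": bool(self.goal.strip()),
            "inputs_and_constraints": bool(self.inputs_and_constraints),
            "process_steps": bool(self.process_steps),
            "output_format": bool(self.output_format.strip()),
        }
        return [name for name, present in checks.items() if not present]


# A draft that defines a goal and steps but no constraints or output format.
draft = AgentSpec(
    goal="Identify the top three themes affecting retention",
    process_steps=["Group feedback by theme", "Rank themes by frequency"],
)
print(draft.missing_elements())  # ['inputs_and_constraints', 'output_format']
```

A check like this only confirms that each element has been written down. Whether the goal and steps are the right ones still takes human review.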

AI Agents vs. Agentic AI: What’s the Difference?

The word “agentic” means that something is capable of acting independently to accomplish a task or goal. But what is agentic AI, and how is it different from AI agents?

As AI evolves, the terms “AI agents” and “agentic AI” are often used interchangeably, but they are not the same thing. “AI agents” refers to the systems themselves: the tools product managers use to complete tasks like analyzing feedback, generating structured outputs, or executing repeatable workflows.

Agentic AI describes a broader capability: systems that can plan, make decisions, adapt, and coordinate actions toward an outcome with minimal direct instruction. This includes connecting to external data sources, triggering actions across tools, and executing multi-step workflows, often without a human directing each step.

A simple way to think about it is that AI agents do the work, while agentic AI describes how independently and intelligently that work gets done. This distinction matters because it changes how these systems should be used. This article goes into detail about the differences between AI agents and agentic AI.

What we are talking about is the application of AI agents in the daily work of product management, not the broader idea of agentic AI.

How Can Product Managers Use AI Agents?

One of the most useful ways to think about AI agents is like an intern, Dan suggests. They are best suited for the kind of structured, repeatable work you would normally delegate to an intern. You wouldn’t set an intern loose on creating strategy, but you would let them deduplicate a backlog. Unlike interns, agents can’t learn from feedback on their own. The refinement happens in how you update their instructions.

That framing matters because it sets the right expectations. It also helps set the stage for how those requests are handed over to the AI agent. You wouldn’t give your intern a vague request and expect a perfect outcome. You would define the goal, explain the steps, set boundaries, and review what they produce.

The same applies here.

AI agents can take on meaningful work, but they require direction. They need clearly defined goals, explicit instructions, and structured expectations. And just like an intern, you still need to check in on the work, validate the output, and refine how the task is being executed over time.

This is where many teams go wrong. They assume the agent will figure it out. In reality, the quality of the output is directly tied to the clarity of the instruction. When used intentionally, agents become a force multiplier. Left unstructured, they become inconsistent.

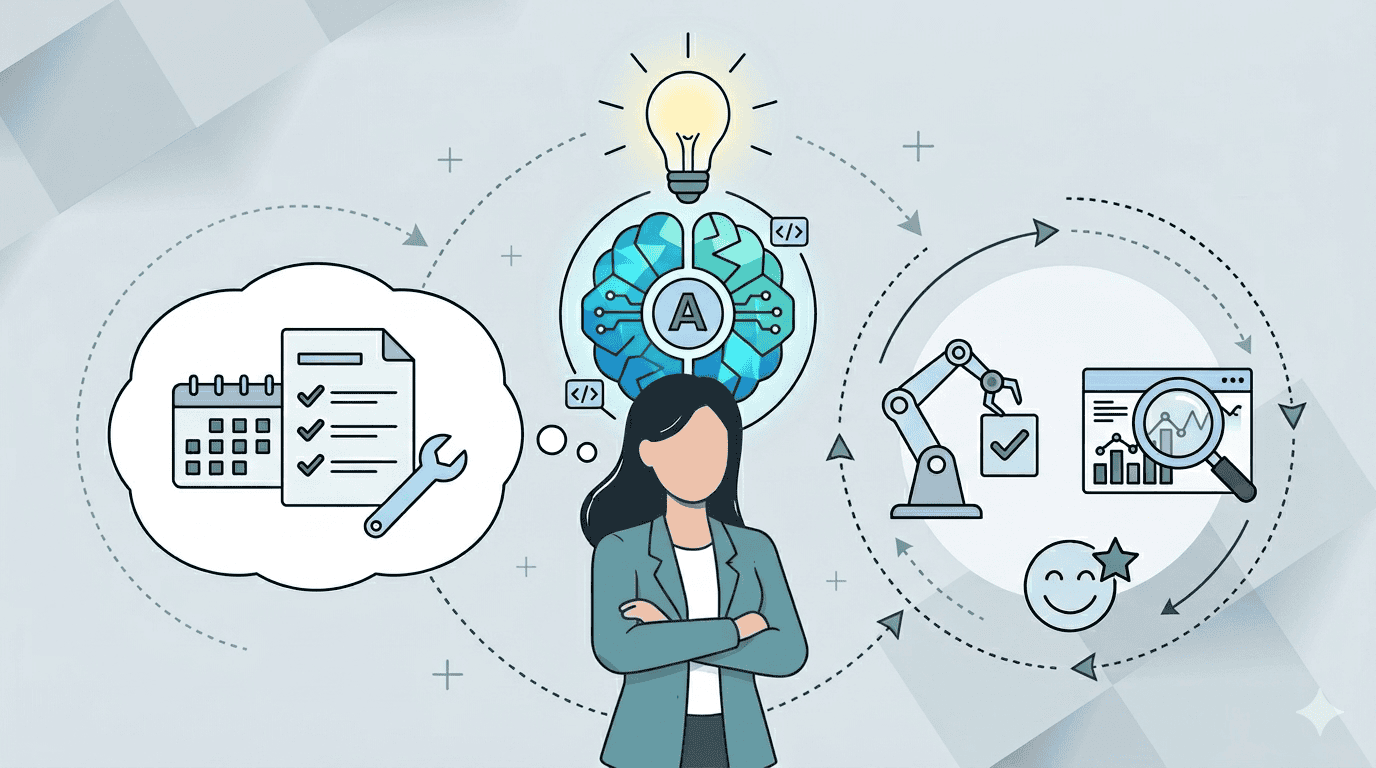

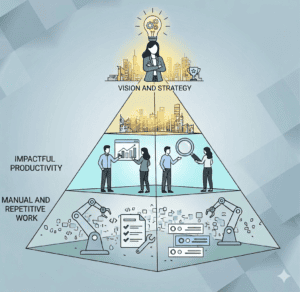

The AI Agent Pyramid

The type of work AI agents are best suited for is easy to visualize with Dan’s AI Agent Pyramid. At the bottom are the lowest-value tasks, defined by busy work, aggregation, and repeatable execution. It’s toil and firefighting, not the ideal way for PMs to spend their time.

As you move up, the work becomes less structured and more dependent on judgment, including prioritization, trade-offs, and strategy.

AI agents are best suited for the bottom of that pyramid.

They take on the structured, manual work that product managers often spend significant time on, such as synthesizing inputs, organizing data, and generating first drafts. As Dan explains, this allows product managers to spend less time processing information and more time using it.

Dan stresses, “The goal isn’t to automate the top of the pyramid. It’s to create more space to operate there.” In other words, if you’re using AI agents well, you should spend less time on busy work and more time on strategic, high-level work.

In practice, that means:

- Using agents to handle aggregation, synthesis, and first-pass outputs

- Relying on those outputs to inform decisions, not replace them

- Recognizing when an agent output needs your review before it moves forward

This is where the real value shows up, not in doing more work, but in spending more time on the work that matters most. With this in mind, the role of AI agents in product work becomes clearer.

What Type of Tasks Can AI Agents Do for Product Managers?

Not every task is a good fit for an AI agent. Dan emphasizes that the best candidates share a few common characteristics: they’re structured, repeatable, and clearly defined. In practice, that means focusing on work like:

- Structured, repeatable tasks: Work that follows the same steps every time (e.g., synthesizing interviews, summarizing feedback)

- Data-heavy analysis and aggregation: Tasks that require aggregating and structuring inputs from multiple data sources (VoC, surveys, market data)

- Initial output creation (the work you’d delegate to an intern): Initial drafts, summaries, clustering, or converting raw inputs into usable formats

- Tasks with clearly defined inputs and outputs: Where you can specify what goes in and what “good” looks like coming out

These are the areas where product managers spend significant time gathering, organizing, and interpreting information.

One example Dan highlights is what he calls his stakeholder translator. This is his agent that takes a single update and tailors it for different audiences across the business. Instead of manually rewriting the same information for engineering, sales, marketing, and executives, the agent breaks it down into targeted versions, each focused on what that group actually needs to know.

As Dan describes it, the result is “seven different updates that are drastically different, but pinpointed and very specific,” ensuring each stakeholder gets information that is relevant and actionable. This doesn’t replace communication. It improves clarity and alignment.
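To make the idea concrete, here is a minimal Python sketch of how one update could fan out into audience-specific instructions. It is not Dan’s actual agent: the `AUDIENCE_FOCUS` table, the focus descriptions, and the `build_audience_prompts` function are all assumptions made for illustration, and the sketch only builds the instructions rather than calling any AI service.

```python
# Hypothetical guidance per audience. The article names these four groups,
# but the focus descriptions below are illustrative assumptions.
AUDIENCE_FOCUS = {
    "engineering": "technical scope, dependencies, and timeline changes",
    "sales": "customer-facing benefits and availability dates",
    "marketing": "positioning, messaging, and launch timing",
    "executives": "business impact, risks, and decisions needed",
}


def build_audience_prompts(update: str) -> dict[str, str]:
    """Return one instruction string per audience for the same source update."""
    prompts = {}
    for audience, focus in AUDIENCE_FOCUS.items():
        prompts[audience] = (
            f"Rewrite the update below for the {audience} audience.\n"
            f"Focus on: {focus}.\n"
            "Use only facts stated in the update.\n\n"
            f"Update:\n{update}"
        )
    return prompts


prompts = build_audience_prompts("Checkout redesign ships May 12; API v1 is retired.")
print(prompts["sales"])
```

Keeping the source update identical across audiences and varying only the focus is one way to keep the tailored versions consistent with each other.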

This is just one example of how a product manager could use an AI agent. In reality, AI agents can be used across a wide range of product activities.

Specific Product Management Tasks AI Agents Can Do

If you think about AI agents the way Dan suggests, like an intern you assign work to, the right use cases become easier to spot. They’re the tasks you would normally delegate: structured, repeatable work that requires consistency more than creativity.

To help you get started, we’ve created the following lists of tasks that are good candidates for AI agents in daily product management work.

Understanding customers and the market

- Voice-of-customer consolidation across support tickets, reviews, and sales notes

- Market and competitor scans with trend tracking

- Analyst reports and industry news summarization

These use cases help product managers move faster from raw input to usable insight.

Validating ideas and decisions

- Survey analysis and theme clustering

- User test transcription and highlight extraction

- Collecting and synthesizing third-party market analysis and industry expert reports

Here, agents reduce the time it takes to validate assumptions and identify patterns.

Creating and refining product work

- Clustering and deduplicating idea backlogs

- Generating user stories from problem statements

- Scoring ideas using customer and market data

This is where agents begin shaping product artifacts, not just analyzing inputs.
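As an illustration of the first item, backlog deduplication is structured enough that even a simple text-similarity pass shows the shape of the task. The sketch below uses Python’s standard `difflib` module; the `deduplicate` function, the 0.85 threshold, and the sample backlog are assumptions for illustration, and a real agent would likely compare meaning rather than spelling.

```python
from difflib import SequenceMatcher


def normalize(title: str) -> str:
    """Lowercase the title and collapse repeated whitespace."""
    return " ".join(title.lower().split())


def deduplicate(titles: list[str], threshold: float = 0.85) -> list[list[str]]:
    """Group titles whose normalized text is at least `threshold` similar
    to the first title in an existing group."""
    groups: list[list[str]] = []
    for title in titles:
        for group in groups:
            ratio = SequenceMatcher(
                None, normalize(title), normalize(group[0])
            ).ratio()
            if ratio >= threshold:
                group.append(title)
                break
        else:
            # No existing group was close enough, so start a new one.
            groups.append([title])
    return groups


backlog = [
    "Add dark mode",
    "Add  Dark Mode",
    "Export reports to CSV",
    "add dark mode.",
]
for group in deduplicate(backlog):
    print(group)
```

The grouped output is a first pass for a product manager to review, not a final decision about which ideas to merge.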

Supporting execution and delivery

- Converting requirements into draft Jira or Rally tickets

- Summarizing sprint reviews and retrospectives

- Drafting stakeholder updates from sprint activity and delivery milestones

These tasks are necessary but time-consuming, making them ideal candidates for delegation.

Supporting go-to-market and launch

- Drafting positioning documents from feature briefs

- Generating launch checklists and status updates

- Tracking post-launch metrics and customer feedback

Across all of these examples, the pattern is consistent. AI agents are not replacing product managers. They are improving how quickly and consistently work moves from input to output.

How Can Product Managers Get Started with AI Agents?

Getting started with AI agents does not require a full system overhaul. Most product managers begin by applying them to a single, repeatable part of their work.

Dan’s guidance is to start small, define the work clearly, and refine over time. In practice, that means identifying tasks that already follow a pattern and introducing structure around them.

These tend to include:

- Understanding customers and the market

- Making sense of data and inputs

- Creating and refining product work

- Supporting execution and communication

The more structured the task, the easier it is to turn it into something an agent can handle. Review the use cases listed above alongside your own workload to find a good candidate for an AI agent. The best starting point is usually a task you do often that follows the same steps every time.

This is also worth approaching as a team effort. If your organization has shared workflows, product standards, or common operating processes, those are strong candidates for agents that the whole team can use. When one PM builds and refines an agent for a recurring task, that work doesn’t have to stay siloed. Shared agents create consistency across the team and prevent everyone from solving the same problem independently.

Steps for Getting Started

One of the most common mistakes teams make is trying to do too much too quickly. A better approach is to focus on a single use case and build from there.

Dan often compares this to onboarding an intern. You don’t just assign the work; you define it, guide it, and refine it over time. With AI agents, that process needs to be more explicit.

1. Define the Outcome

Start by clearly defining the goal, not by stating a task. The output is only valuable if it aligns with what you actually need. For example, instead of “summarize this feedback,” try, “identify the top three themes affecting retention.”

2. Create a Playbook

Document how the work is done today, step by step. As Dan explains, this means “documenting each step of what you want to be accomplished,” so the agent has a clear structure to follow.

3. Validate the Process

Review the playbook with subject matter experts. This ensures the steps reflect how the work should actually be done.

4. Define Inputs and Outputs

Be explicit about what data the agent can use, what constraints it should follow, and what the final output should look like.

5. Run and Review

Execute the agent and evaluate the output. Treat the first result as a starting point, not a finished product.

6. Refine and Iterate

Adjust the process over time. Improve the steps, inputs, and structure based on real usage and results.

Agents also require ongoing attention. Models get updated, data sources shift, and the context around a task evolves. An agent that produces reliable output today can quietly degrade without anyone touching it. The instructions did not change, but the results did.

Build in a regular review cadence, even a brief one. Spot check outputs, watch for inconsistencies, and revisit your instructions when the work around the agent changes. The goal is not to micromanage it. The goal is to make sure it is still doing what you designed it to do.
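Part of a spot check can be automated. The following Python sketch samples recent outputs and flags any that are missing a section the output format requires. The `spot_check` function, the section names, and the sample outputs are hypothetical, and the check covers structure only: it cannot tell whether the content is accurate, so it supports a human review rather than replacing one.

```python
import random


def spot_check(outputs, required_sections, sample_size=5, seed=None):
    """Sample outputs and report which required sections each one lacks.

    Returns a list of (output_index, missing_sections) pairs for sampled
    outputs that are missing at least one section.
    """
    rng = random.Random(seed)
    indices = list(range(len(outputs)))
    sampled = rng.sample(indices, min(sample_size, len(indices)))
    problems = []
    for i in sorted(sampled):
        text = outputs[i].lower()
        missing = [s for s in required_sections if s.lower() not in text]
        if missing:
            problems.append((i, missing))
    return problems


recent_outputs = [
    "Top themes: onboarding friction\nEvidence: 14 tickets\nConfidence: medium",
    "Top themes: pricing confusion\nEvidence: 9 tickets",
]
issues = spot_check(recent_outputs, ["Top themes", "Evidence", "Confidence"])
print(issues)  # [(1, ['Confidence'])]
```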

The AI Agent Playbook: Turning Work Into a Repeatable System

One of the most important concepts Dan emphasizes is the idea of an AI agent playbook. This is what separates a one-off experiment from something that can be used consistently in real product work.

At its core, a playbook is simply a documented version of how work gets done today, written as instructions for your agent. Instead of relying on intuition or common knowledge, you break the process down into clear, repeatable steps that an agent can follow.

As Dan explains, the goal is to be explicit: “documenting each step of what you want to be accomplished,” rather than assuming the agent will infer how to get there.

This is where many teams struggle. They try to jump straight to using an agent without first defining the process behind the task. But without that structure, outputs are inconsistent and difficult to trust.

What Goes Into an AI Agent Playbook

A strong playbook doesn’t need to be complex, but it does need to be clear. At a minimum, it should define:

- The goal: What outcome should this produce? What does success look like?

- The inputs: What data or sources should the agent use?

- The steps: How is the work completed today, step by step?

- The constraints: What should the agent avoid or be limited by?

- The output format: How should the result be structured so it can be used?

Dan stresses that this level of clarity is critical because agents do not inherently understand intent. As he notes, “Most people skip the playbook and go straight to the prompt. That’s where things fall apart. If you haven’t documented how the work gets done today, you’re just guessing at the instructions.”
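One way to keep a playbook explicit is to store it as structured data and generate the agent’s instructions from it. The sketch below is a minimal Python illustration, assuming a hypothetical `Playbook` class with the five fields listed above; the rendered text layout and the sample values are assumptions, not a format any specific tool requires.

```python
from dataclasses import dataclass


@dataclass
class Playbook:
    """Hypothetical playbook record with the five elements listed above."""
    goal: str
    inputs: list[str]
    steps: list[str]
    constraints: list[str]
    output_format: str

    def to_instructions(self) -> str:
        """Render the playbook as plain-text instructions for an agent."""
        lines = [f"Goal: {self.goal}", "", "Inputs you may use:"]
        lines += [f"- {item}" for item in self.inputs]
        lines += ["", "Steps:"]
        lines += [f"{n}. {step}" for n, step in enumerate(self.steps, start=1)]
        lines += ["", "Constraints:"]
        lines += [f"- {item}" for item in self.constraints]
        lines += ["", f"Output format: {self.output_format}"]
        return "\n".join(lines)


playbook = Playbook(
    goal="Identify the top three themes affecting retention",
    inputs=["Support tickets from the last quarter"],
    steps=["Group tickets by theme", "Rank themes by ticket count"],
    constraints=["Do not use data outside the provided tickets"],
    output_format="A ranked list of three themes with supporting ticket counts",
)
print(playbook.to_instructions())
```

Because the instructions are generated from the record, updating a step in one place updates what every run of the agent receives.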

Why the Playbook Matters

The playbook is what makes an AI agent reliable. It ensures that the work being done is:

- Consistent across uses

- Aligned to the intended outcome

- Repeatable across teams

It also creates a shared understanding of how the work should be done. Once documented, that process can be reviewed, improved, and reused.

Dan also points out that documenting the process often reveals opportunities to simplify it. What may be a five- to ten-step manual workflow can sometimes be streamlined when translated into an agent, but only if the underlying logic is clearly defined first.

When something goes wrong with an agent’s output, update the playbook as you diagnose and fix the issue so that it always reflects current, correct operating information. That makes it a governance asset, not just an operational one.

Finally, a well-documented playbook gives you portability. The instructions and logic live with you, not with the tool. If you decide to move from one AI platform to another, the playbook moves with you. You own the workflow. You are simply renting the technology.

Validating and Refining the AI Playbook

Creating the playbook is not a one-time step. It should be reviewed and refined as the agent is used.

Dan recommends validating the process with subject matter experts early on. This is not just to review the process, but to confirm that the documented steps reflect how the work gets done and lead to the right outcome.

In practice, that means walking through the playbook step by step and asking: does this match how you would do it, and will this produce what you need? This is especially important because documenting the process often exposes gaps or assumptions.

From there, validation continues through real usage. Reviewing outputs, identifying where results fall short, and adjusting the steps, inputs, or constraints helps improve the playbook over time.

Over time, this turns the playbook into a living system, one that evolves alongside the product, the data, and the team using it.

Principles for Using AI Agents Effectively

As product managers begin to incorporate AI agents into their work, a few principles consistently separate effective use from frustration.

- Focus on outcomes, not just tasks: Define what success looks like, not just what needs to be done

- Maintain ownership of decisions and prioritization: Agents support decisions; they don’t replace them

- Ground outputs in real, relevant data: The quality of the output depends on the quality of the inputs

- Design for repeatability and consistency: Structure work so it can be performed reliably over time

- Check in regularly and review outputs: As Dan emphasizes, you can’t set this up and walk away. You need to validate that the agent is producing accurate, useful results

- Monitor for drift over time: Models, data, and conditions change. Outputs can shift, even if nothing else does

- Continuously refine and improve: Adjust steps, inputs, and constraints based on what you’re seeing in practice

- Start with one case: Begin with a single use case to build skills and familiarity, then expand from there

These principles ensure that AI remains a tool for improving product work, not replacing the thinking behind it. When used intentionally, agents increase consistency and speed. Without oversight, they introduce risk.

Defining Success with AI Agents

Just as important as how you use AI agents is how you measure whether they’re actually working. As Dan points out, success isn’t just about whether an agent produces output; it’s about whether it meaningfully improves how you spend your time.

In practice, success looks like:

- Time saved on repetitive, manual work: Tasks that used to take hours are completed faster and more consistently

- More time spent on high-value work: The goal is to shift time toward more important work like prioritization, strategy, and decision-making

- Sustained adoption paired with downstream use: If people keep using the agent and the outputs are making it into real work products, that’s a meaningful signal of value

- Outputs that are actually usable: Results don’t need to be perfect, but they should be good enough to move work forward

- Accuracy and consistency: Results are reliable enough that reviewers spend time refining, not rebuilding. Reduction in rework over time is a strong signal that the playbook is improving
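Two of these signals, time saved and reduction in rework, are simple to track if each agent run is logged. The Python sketch below shows one possible calculation. The `AgentRun` record, its fields, and the sample numbers are assumptions for illustration, and the manual-time figures would be estimates supplied by the team.

```python
from dataclasses import dataclass


@dataclass
class AgentRun:
    """Hypothetical log entry for one use of an agent."""
    manual_minutes: float  # estimated time to do the task by hand
    agent_minutes: float   # time spent running the agent and reviewing output
    rebuilt: bool          # True if the reviewer had to redo the work


def summarize(runs: list[AgentRun]) -> dict[str, float]:
    """Return total minutes saved and the share of runs that needed a rebuild."""
    if not runs:
        raise ValueError("at least one run is required")
    minutes_saved = sum(r.manual_minutes - r.agent_minutes for r in runs)
    rework_rate = sum(r.rebuilt for r in runs) / len(runs)
    return {"minutes_saved": minutes_saved, "rework_rate": rework_rate}


runs = [
    AgentRun(manual_minutes=120, agent_minutes=30, rebuilt=False),
    AgentRun(manual_minutes=120, agent_minutes=45, rebuilt=True),
    AgentRun(manual_minutes=90, agent_minutes=20, rebuilt=False),
    AgentRun(manual_minutes=90, agent_minutes=25, rebuilt=False),
]
print(summarize(runs))  # {'minutes_saved': 300, 'rework_rate': 0.25}
```

Comparing the rework rate across review periods gives a rough view of whether the playbook is improving.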

AI Agent Safeguards for Product Managers

As AI agents become more capable, structure and oversight become more important. Without clear guardrails, even well-designed agents can produce inconsistent or misleading outputs. For example, an agent summarizing customer feedback may overweight recent inputs and miss longer-term patterns, leading to a prioritization decision based on incomplete signal.

Dan emphasizes that maintaining a human in the loop is critical, particularly when outputs influence decisions or customer-facing work. Oversight ensures that results are evaluated, not blindly accepted.

He also points out that as agents are used across teams, knowing which agents exist, what they do, and how they are performing becomes a shared responsibility. Tracking how agents perform through logging and monitoring allows teams to refine and improve them over time.

Effective guardrails include:

- Clear goals and success criteria

- Explicit data boundaries

- Defined allowed actions

- Structured output formats

- Human-in-the-loop review

- Monitoring and logging for team usage

- Ongoing testing and updates

These are not limitations. They are what make AI agents reliable enough to use in real product environments.
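Some of these guardrails can be enforced before an agent runs at all. The following Python sketch checks a request against explicit data boundaries and allowed actions, then logs the result. The allow-lists, the `check_request` function, and the agent and source names are hypothetical; real boundaries would come from your own data policies.

```python
import logging

logger = logging.getLogger("agent_guardrails")

# Hypothetical allow-lists; real values depend on your organization's policies.
ALLOWED_SOURCES = {"support_tickets", "survey_responses"}
ALLOWED_ACTIONS = {"summarize", "cluster_themes"}


def check_request(agent_name: str, action: str, sources: list[str]) -> list[str]:
    """Return a list of guardrail violations; an empty list means approved."""
    violations = []
    if action not in ALLOWED_ACTIONS:
        violations.append(f"action not allowed: {action}")
    for source in sources:
        if source not in ALLOWED_SOURCES:
            violations.append(f"data source not allowed: {source}")
    # Log every decision so the team can see which agents ran and why some were blocked.
    if violations:
        logger.warning("%s blocked: %s", agent_name, "; ".join(violations))
    else:
        logger.info("%s approved: %s on %s", agent_name, action, ", ".join(sources))
    return violations


print(check_request("voc_agent", "summarize", ["support_tickets"]))
print(check_request("voc_agent", "send_email", ["crm_export"]))
```

A pre-run check like this covers data boundaries and allowed actions only. Human review of the output remains a separate step.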

Final Thoughts on AI Agents and PMs

AI agents do not change what product managers are responsible for. They change how efficiently work moves from input to output. The advantage is not just speed. It is consistency, clarity, and the ability to reduce the friction between gathering information and making decisions.

For product managers, that shift is where the real value lies. The product managers who build this muscle now will be better positioned as agents become more capable and more embedded in how product work is done in this Age of AI.

Learn more about using AI in product work:

- Free eBook on using AI for Market Research

- Free eBook with 64 Prompts for Product Managers

- AI for Product Managers course

FAQs on AI Agents and PMs

What is an AI agent in product management?

An AI agent is a system that can take a goal, work through steps using data and tools, and produce an outcome such as insights, summaries, or product artifacts.

How is an AI agent different from ChatGPT?

ChatGPT responds to prompts, while AI agents can plan, execute multiple steps, and use tools or data sources to complete tasks.

What can product managers use AI agents for?

They are commonly used for customer insight analysis, feedback aggregation, survey analysis, drafting product artifacts, and summarizing work across teams.

Are AI agents replacing product managers?

No. They reduce time spent on structured tasks, but product managers still own decisions, prioritization, and strategy.

What are the risks of using AI agents?

Risks include poor data quality, lack of oversight, inconsistent outputs, and over-reliance without validation. A well-structured playbook and regular human review are the primary tools for managing those risks.

Author

The Pragmatic Editorial Team comprises a diverse team of writers, researchers, and subject matter experts. We are trained to share Pragmatic Institute’s insights and useful information to guide product, data, and design professionals on their career development journeys. Pragmatic Institute is the global leader in Product, Data, and Design training and certification programs for working professionals. Since 1993, we’ve issued over 250,000 product management and product marketing certifications to professionals at companies around the globe. For questions or inquiries, please contact [email protected].